User manual cod nodes old

This document describes how to use the high-performance compute nodes of the cod group at CEES.

Contents

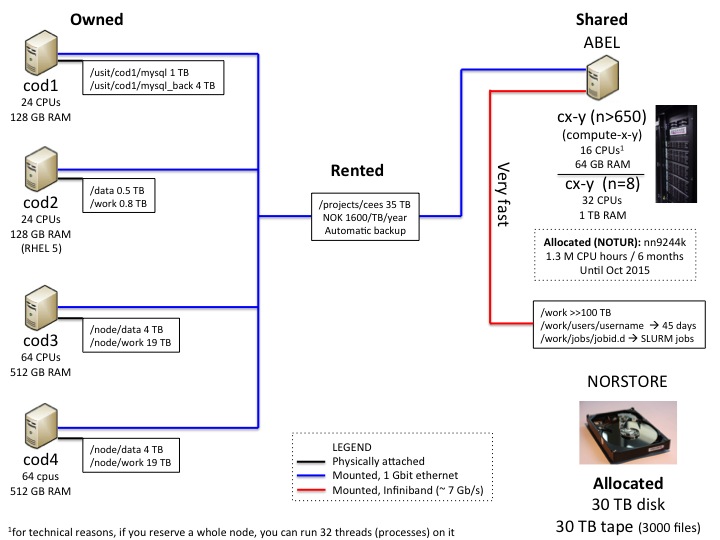

Hardware

We have the following resources available, the use of which is explained on this wiki:

Owned

cod1: 24 CPUs, 128 GB of RAM, ~1 TB disk space (mainly for MySQL databases)

cod2: 24 CPUs, 128 GB of RAM, ~1.3 TB disk space

cod3: 64 CPUs, 512 GB of RAM, ~24 TB disk space

cod4: 64 CPUs, 512 GB of RAM, ~24 TB disk space

Rented

Diskspace at /projects/cees, at the time of writing 35 TB. We have to pay a yearly fee to use this disk space. See below for how to use this resource.

Shared

On the UiO supercomputer "Abel", we have applied for, and been granted, an allocation for a certain amount of CPU hours. For more detail, see Abel use.

Norstore

Norstore is "a national infrastructure for the management, curation and long-term archiving of digital scientific data." We have applied for, and been granted, an allocation for a certain amount of disk and tape space for archiving data. See below for how to use this resource.

General use

Getting access

Provide Lex Nederbragt with your UiO username.

Mailing list

If you're not already on it, get subscribed to the cod-nodes mailing list: https://sympa.uio.no/bio.uio.no/subscribe/cod-nodes. We use this list to distribute information on the use of the CEES cod nodes.

If you intend to use one of the nodes for an extended period of time, please send an email to this list!

Logging in

To log in, type the following command into your command line interface (e.g. Terminal for Mac users):

ssh username@cod1.uio.no

When you are on the UiO network it is enough to write ssh cod1

Check that you are a member of the group 'seq454' by simply typing:

groups

If 'seq454' is not listed, please contact Lex Nederbragt.

Setting up your environment

Making a bash script

The first thing you should do before working on the cod nodes is to create a bash script in your home directory (/usit/abel/u1/username), which is the default area after logging in. This is done with a terminal-based text editor (e.g. nano or emacs):

nano .bash_login

This command opens the text editor, into which you paste the following text:

export PATH=/projects/cees/bin:/projects/cees/scripts:$PATH

umask 0002

module use /projects/cees/bin/modules

This is necessary to:

- have access to the commonly used programs and scripts (export PATH=/projects/cees/bin:/projects/cees/scripts:$PATH)

- help with automatically setting permissions to new files and folders (umask 0002)

- use our own modules (module use /projects/cees/bin/modules) - see below.

Save the file and exit the terminal-based text editor.
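If you prefer not to paste by hand, the same three lines can be appended from the command line. The following is a minimal sketch, not an official setup script: add_line_once is a hypothetical helper, and LOGIN_FILE is an assumed variable that defaults to a scratch file so that nothing in your home directory is touched until you point it at ~/.bash_login yourself.

```shell
# Sketch: append a line to a file only if it is not in there yet.
# add_line_once is a hypothetical helper, not an existing command.
add_line_once() {
    file=$1
    line=$2
    touch "$file" || return 1
    # -F: fixed string, -x: the whole line must match, -q: quiet
    grep -Fxq -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# LOGIN_FILE is an assumption: it defaults to a scratch file.
# Set LOGIN_FILE=~/.bash_login once you are happy with the result.
LOGIN_FILE=${LOGIN_FILE:-$(mktemp)}

add_line_once "$LOGIN_FILE" 'export PATH=/projects/cees/bin:/projects/cees/scripts:$PATH'
add_line_once "$LOGIN_FILE" 'umask 0002'
add_line_once "$LOGIN_FILE" 'module use /projects/cees/bin/modules'

# Show what was written
cat "$LOGIN_FILE"
```

Running the snippet a second time does not duplicate the lines, so it is safe to repeat.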

Advanced tip: To get a fancy prompt add this to your .bash_login file:

magenta=$( tput setaf 5)

cyan=$( tput setaf 6)

green=$( tput setaf 2)

yellow=$( tput setaf 3)

reset=$( tput sgr0)

bold=$( tput bold)

export PS1="\[$magenta\]\t\[\$reset\]-\[\$cyan\]\u\[\$reset\]@\[\$green\]\h:\[\$reset\]\[\$yellow\]\w\[\$reset\]\$ "

Setting up your own project folder(s)

see this link for advice on how to set up a folder for your project in /projects/cees/in_progress

Checking how much disk space is available

On the cod nodes

type

df -h

Look for the /node/data and /node/work partitions.
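To avoid scanning the full df output, you can ask df about the two local areas only. This is a small sketch; show_free is a hypothetical helper name, and it simply skips a folder that does not exist on the machine you are logged in to.

```shell
# Sketch: print free space for the given folders, skipping missing ones.
# show_free is a hypothetical helper, not an existing command.
show_free() {
    for dir in "$@"; do
        if [ -d "$dir" ]; then
            df -h "$dir"
        else
            echo "skipping $dir (not found on this machine)" >&2
        fi
    done
}

show_free /node/data /node/work
```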

For the /projects/cees area

type

ssh abel.uio.no dusage -p cees

Solving the 'broken pipe' for unstable networks

If you have recurrent problems of ‘broken pipe’ when working on the cod-nodes or abel (meaning you need to log in again after just a couple minutes of inactivity), here is a solution.

Locally (on your own computer), add the following to ~/.ssh/config:

Host *

ServerAliveInterval 60

With this setup the connection will be poked every 60 sec, and will therefore be kept alive. Thanks to Tore Oldeide Elgvin for the tip.

Organization of the nodes

All nodes have the disks of 'abel' (the UiO supercomputer cluster) and your abel home area mounted. So, all the files located in /projects are available on the cod nodes, see below. In addition, the nodes have local disks, currently:

/node/data --> for permanent files, e.g. input to your program

/node/work --> working area for your programs - see below for details.

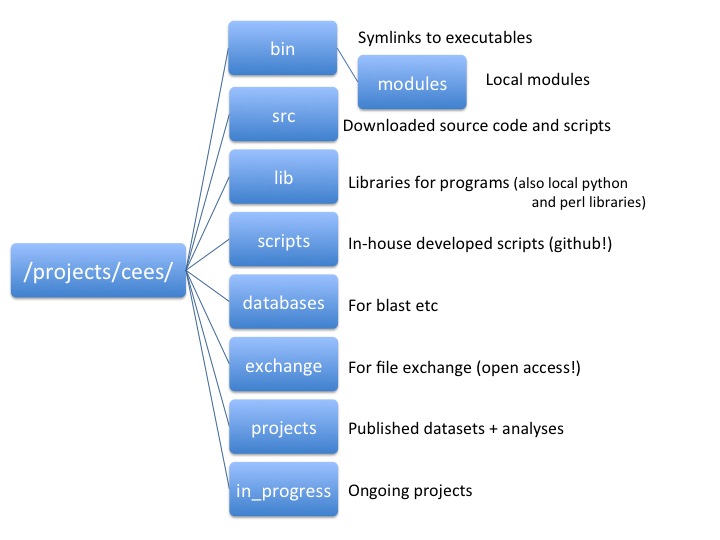

Organization of CEES projects

CEES projects are organised as follows: /projects/cees is the main area. Access is for those in the seq454 unix user group (the name is a relic from when we first started using this area). Please check that files and folders you create have the right permissions:

chgrp -R seq454 yourfolder

chmod -R 770 yourfolder

chmod -R g+s yourfolder

The last command sets the setgid bit, which ensures that new files and folders created inside yourfolder get the same group as well.
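The three commands above can be wrapped in one small function. This is a sketch under stated assumptions: share_with_group is a hypothetical helper, the group defaults to your primary group so that the example runs on any machine (on the cod nodes you would pass seq454), and, unlike the commands above, the setgid bit is set on folders only, since it has no use for group inheritance on regular files.

```shell
# Sketch: give a group full access to a folder and everything in it.
# share_with_group is a hypothetical helper, not an existing command.
share_with_group() {
    folder=$1
    group=${2:-$(id -gn)}   # assumption: default to your primary group
    chgrp -R "$group" "$folder" || return 1
    chmod -R 770 "$folder" || return 1
    # setgid on folders only: new files inherit the group of the folder
    find "$folder" -type d -exec chmod g+s {} +
}

# Try it on a scratch folder first
demo=$(mktemp -d)
mkdir -p "$demo/yourfolder/sub"
touch "$demo/yourfolder/sub/file.txt"
share_with_group "$demo/yourfolder"
ls -ld "$demo/yourfolder" "$demo/yourfolder/sub"
```

On the cod nodes the call would be share_with_group yourfolder seq454. In the ls output, an 's' in the group part of the permissions (rwxrws---) shows that the setgid bit is set.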

It is possible to restrict access to a subgroup of those in the seq454 group; please ask Lex Nederbragt.

Folders in /projects/cees:

Data storage

Choosing where to work with your data

- do not use your abel home area, you only have 200 GB and you are not meant to share data there with others, e.g. your colleagues

- data on /projects/cees is backed up by USIT, but NOT data on /node/data and /node/work

- reading and writing data to and from /node/data and /node/work will be much faster and more efficient than to /projects/cees

- DO NOT USE /projects/cees for data needed in (medium to large) analyses; use /node/work on the cod nodes, $SCRATCH for SLURM jobs, or - if we decide this - /work on Abel

This leads to the following strategy for how to choose which disk to use:

- for something short and quick, e.g. less, tar, you can directly work on data in /projects/cees

- for a long running program, or one that generates a lot of data over a long time, use the locally attached /node/data and /node/work

- once the long running job is done, you can move the data you want to keep to /projects/cees

- NOTE having your program write a lot over a long time to a file on /projects/cees causes problems for the backup system, as the file may be changed during backup

- NOTE use compression (gzip, pbzip2) where possible!

- for long-term storage of data you do not need regular access to, please use the norstore allocation, see below
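The strategy above (copy the input to a local disk, run there, compress, then move what you want to keep) can be sketched as a small function. Everything here is illustrative: stage_and_run is a hypothetical helper, the folder names in the usage comment are placeholders, and the command you run is assumed to write its results to standard output.

```shell
# Sketch: stage input on a fast local disk, run a command there,
# and keep only the compressed output in a backed-up area.
# stage_and_run is a hypothetical helper, not an existing command.
stage_and_run() {
    src=$1     # input folder, e.g. somewhere under /projects/cees/in_progress
    work=$2    # scratch folder, e.g. somewhere under /node/work
    dest=$3    # where to keep the result, e.g. under /projects/cees/in_progress
    shift 3    # the remaining arguments are the command to run

    mkdir -p "$work" "$dest" || return 1
    # Copy the input to the local disk as $work/input
    cp -a "$src" "$work/input" || return 1
    # Run the command inside the work folder, collecting its output
    ( cd "$work" && "$@" > output.txt ) || return 1
    # Compress before copying back
    gzip -f "$work/output.txt" || return 1
    cp -a "$work/output.txt.gz" "$dest/"
}

# Usage (placeholder paths and program name):
# stage_and_run /projects/cees/in_progress/myproject/data \
#               /node/work/username/myrun \
#               /projects/cees/in_progress/myproject/results \
#               yourprogram input/reads.fastq
```

The work folder is left in place afterwards, so remember to clean it up once you have checked the result.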

Long term storage of data

- long term storage of data you generally do not need to access: norstore tape (time to recover the data: long)

- long term storage of data you may need to access: norstore disk (time to recover the data: intermediate, rsync to working area )

- data from finished publications: appropriate database (e.g. genbank, SRA), datadryad, figshare, or norstore archive

- storage of project data that you need to access occasionally/regularly, finished analyses: /projects/cees/in_progress

Use of norstore

Access

- we have norstore project ID NS9003K

- if you need access to the norstore project, fill out the form at https://www.norstore.no/application-form with project: NS9003K; Project manager: Kjetill Jakobsen

- once you have been added to the user list, please go to https://www.metacenter.no/user/; select 'Reset Password' and ask for an activation key to be sent to your mobile phone

- using the activation key, choose a new password

- the next day, you should be able to log in

- NOTE that Lex would like to hear whether the above actually is working as described...

- command to get into norstore:

ssh login.norstore.uio.no

Organisation of the area

- when you log in, you will be in the folder /norstore_osl/home/username

- our data is stored in /projects/NS9003K

- the folders there are:

- 454data, **runsIllumina, **runsPacbio --> from the Norwegian Sequencing Centre, do not touch

- projects --> where you can store your files

In /projects/NS9003K/projects, please use the same folder name as you use on Abel in /projects/cees/in_progress. Add clear README files.

To copy files/folders to norstore

NOTE in general it is wise to copy data first, then delete the original. This prevents accidental data loss. Do not use the 'mv' command for big files!

Use rsync, it preserves permissions and timestamps, and allows for finishing an interrupted copy job without having to copy every file again.

TIP use 'screen' (Only possible with option 2)

TIP see note on tarballs and md5 sums below

Option 1: when you are logged in on norstore

cd /projects/NS9003K/projects/path/to/yourfolder

rsync -av cod3.uio.no:/projects/cees/in_progress/path/to/folder_to_copy .

NOTE the '.' at the end

NOTE adding a trailing slash '/' to the folder_to_copy will only copy its content, not the whole folder!

Option 2: when you are logged in on abel/cod nodes

cd /projects/cees/in_progress/path/where/folder_to_copy/is

rsync -av folder_to_copy login.norstore.uio.no:/projects/NS9003K/projects/path/to/yourfolder

NOTE adding a trailing slash '/' to the folder_to_copy will only copy its content, not the whole folder!
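If the trailing slash rule is unclear, you can try it out locally before copying anything big. The sketch below uses scratch folders only (dest_a, dest_b and reads.fastq are made-up names) and does nothing if rsync is not installed.

```shell
# Sketch: see the effect of a trailing slash on scratch folders.
demo=$(mktemp -d)
mkdir -p "$demo/folder_to_copy" "$demo/dest_a" "$demo/dest_b"
touch "$demo/folder_to_copy/reads.fastq"

if command -v rsync >/dev/null 2>&1; then
    # No trailing slash: the folder itself is copied
    rsync -a "$demo/folder_to_copy" "$demo/dest_a/"
    # Trailing slash: only the content of the folder is copied
    rsync -a "$demo/folder_to_copy/" "$demo/dest_b/"
    # List what ended up where
    ( cd "$demo" && find dest_a dest_b -type f )
else
    echo "rsync is not installed here" >&2
fi
```

The file ends up as dest_a/folder_to_copy/reads.fastq in the first case and as dest_b/reads.fastq in the second.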

Using the norstore tape-storage service

The tape storage is for storing data which is accessed less frequently, or duplicating data stored on a disk.

As we have a limited number of files that can be stored on tape (100 per 1TB of tape space), ALWAYS make a compressed tarball of your data first (i.e., before copying to norstore):

tar -cvzf filename.tgz your_folder

This will collect and compress your_folder, with all files and folders in it (i.e., recursively) into one big file.

NOTE please add a clear README to your tarball!

NOTE this may take a long time, use 'screen'!

TIP generate an md5 checksum:

md5sum filename.tgz > filename.tgz.md5

This allows for checking whether two files are identical (really and completely). The program generates a long, unique string that is different for each file (one byte difference creates an entirely different string).

NOTE this takes a long time for large files, use 'screen'.

Once your file is on norstore, run the same md5sum command and compare the output. Alternatively, run this command:

md5sum --check filename.tgz.md5
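The tarball and checksum steps can be combined so that the .md5 file is always created next to the tarball. This is a minimal sketch: pack_folder is a hypothetical helper, and the scratch folder is only there so the example can be tried without touching real data.

```shell
# Sketch: make a compressed tarball of a folder, store its md5 sum,
# and check the sum once right away.
# pack_folder is a hypothetical helper, not an existing command.
pack_folder() {
    folder=$1
    parent=$(dirname "$folder")
    name=$(basename "$folder")
    (
        # Work from the parent folder so the tarball has short paths
        cd "$parent" || exit 1
        tar -czf "$name.tgz" "$name" || exit 1
        md5sum "$name.tgz" > "$name.tgz.md5" || exit 1
        md5sum --check "$name.tgz.md5"
    )
}

# Try it on a scratch folder
demo=$(mktemp -d)
mkdir "$demo/your_folder"
echo "what is in this folder" > "$demo/your_folder/README"
pack_folder "$demo/your_folder"
```

Copy both the .tgz and the .md5 file to norstore; running md5sum --check there, in the folder holding both files, then confirms that the copy is intact.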

To copy the tarball to tape

NOTE You have to be logged in to norstore for this

WriteToTape filename.tgz NS9003K projects/yourfolder

This will copy the file filename.tgz under /tape/NS9003K/projects/yourfolder

If you will not keep a copy of the file elsewhere, add the '--replicate' flag:

WriteToTape filename.tgz NS9003K projects/yourfolder --replicate

This will, in addition to copying to /tape/NS9003K/projects/yourfolder, create a replica under /replica/NS9003K/projects/yourfolder (on a different tape)

You will be asked to confirm the job, and will be told that you will receive an email once the writing is done (the job is queued, so you can close the norstore session if you wish).

To list files stored on tape

lst -la /tape/NS9003K

Delete a file from tape

DeleteFromTape /tape/NS9003K/projects/yourfolder/yourfile.tgz

Copy a file from tape back to the norstore disks

cpt /tape/NS9003K/projects/yourfolder/yourfile.tgz /projects/NS9003K/projects/path/to/yourfolder

List files inside a tarball

tart /tape/NS9003K/projects/yourfolder/yourfile.tgz

Replicating a file which is already on tape

MakeReplica /tape/NS9003K/projects/yourfolder/yourfile.tgz

will request to replicate /tape/NS9003K/projects/yourfolder/yourfile.tgz to /replica/NS9003K/projects/yourfolder/yourfile.tgz

More commands

see https://www.norstore.no/services/tape-storage

Software availability

Software installed on abel

A large number of programs are already installed on abel, and can also be used when logged in to the cod nodes. These programs have been installed and are maintained by people at USIT and are available for all users of abel (not just CEES). This software is available through the 'module' system, which is explained in more detail in this manual. If you're logged in to abel or the cod nodes, type module avail and you'll see a list of all the available programs (it will take a few seconds until the list is loaded).

Software installed by CEES users

As USIT people are too busy to answer every request for software installation on abel, an increasing amount of software has been installed by CEES users. This software lives in /projects/cees/bin, and in order to keep things organized, we also follow the module system. If you have properly set up your environment (see above), this type of software also shows up in the list that you see when typing module avail. You'll see it at the top of the list, under the heading '--- /projects/cees/bin/modules ---', while the modules maintained by USIT appear below, under the heading '--- /cluster/etc/modulefiles ---'. Some software in /projects/cees/bin is not yet accessible through modules, but only because we haven't gotten around to that yet. If you need to use software that's installed in /projects/cees/bin, but has no module associated with it, please contact Lex or Micha, and we'll prepare one.

In addition to software installed in /projects/cees/bin/, two more programs are available locally, on single cod nodes only: The Stacks program for RAD tag analysis runs only on cod1, where it has a mysql server dedicated to it. Thus, in order to run Stacks, log in to cod1 first. On cod2, you'll find the smrtportal software for secondary analysis of PacBio runs.

Software not installed yet

If you need to use software that's not installed on abel and the cod nodes yet, there are two ways to make it available. If you're not the only one who needs this software and it is likely to be used by many researchers, it might be worth asking people at USIT whether they could install it for you. To do so, send an email to hpc-drift@usit.uio.no with a polite request, and they'll see what they can do. If you seem to be the only one interested in this software, or if USIT people are too busy to care, you can install the program yourself, in /projects/cees/bin/, and with the corresponding module. To do so, please see the instructions given here.

Note: all nodes run Red Hat Enterprise Linux version 6, except cod2, which uses version 5 (normally, you will not notice this)

Running software

Note to SLURM users

If you are used to submitting jobs through a SLURM script, this will not work on the cod nodes. Here you'll have to give the command directly on the command line.

Job scripts

You can use a job script: collect a bunch of commands and put them in an executable file. Run the command with

source yourcommands.sh

Using screen

If you are starting a long running job you cannot close the terminal window because the job will be cancelled. Instead create a 'screen' in the terminal window from which you start to run the job: type

screen

You have now started a 'new' terminal. Start your job in this new window. After you have started your job you can detach from this screen by pressing ctrl-a-d (the CTRL key with the 'a' key, followed by the 'd' key). Now you're back in the terminal where you started. You can close this terminal or even the computer and the 'new' terminal will still exist and continue to run your job. You can start multiple hidden windows by just repeating this procedure. If you want to get back into the now hidden screen, type

screen -rd

If you have multiple hidden windows this command will give you a list of the windows available. To choose one of the windows just add the window-number after the command.

screen -rd 45142.pts-15.cod4

When your job is finished you need to close the hidden window by typing exit instead of ctrl-a-d

Advanced tip: to make the environment 'inside' the screen more similar to your usual shell, make a file in your home directory called .screenrc (i.e. /usit/abel/u1/username/.screenrc), with the following content:

shell -$SHELL